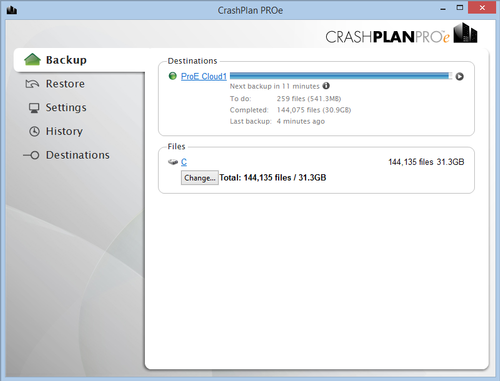

Measuring goodput at the interface, not application self-reporting. I’m averaging about 750 KB/s, compared to Crashplan at ~2 MB/s for never-before-seen content. It’s my choice of Backblaze B2 for storage, specifically. The key thing to keep in mind is that even with alphabetical or node ID-based sorting, there’s already a known problem with deduplication efficiency, that explicit sorting, such as size-based, shouldn’t make worse.Īlso in my experience so far, Duplicacy is objectively not faster than Crashplan - but not having anything to do with the software. (I’m aware that the branch might introduce other issues elsewhere in the code path.)Īlso, grouping by size should also mitigate the problem, but that would be less useful for general backup than by date/descending.

If fracai’s “chunk boundary” branch were merged - which ends chunks at the end of large files - then it would never be a problem, regardless of sorting algorithm. But that wouldn’t apply to all sorting options, and even then is only because Duplicacy groups multiple files into chunks. Yeah I get that about churn, as noted with “might make it worse if done by date”. Referenced PR #456 (“bundle or chunk” algorithm to solve that problem).Referenced discussion #334 (inefficient deduplication with moved/renamed files).Clarified reasons for needing newest-first priority.That single PR alone would likely save me thousands of dollars in cloud storage bills, over the long run. with this PR waiting to be merged to master “ Bundle or chunk #456”.

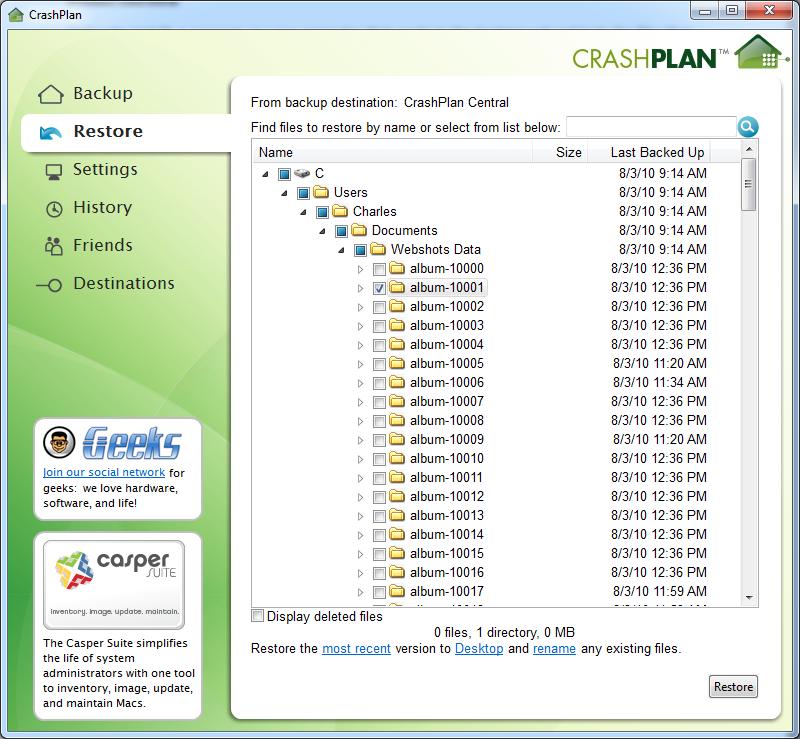

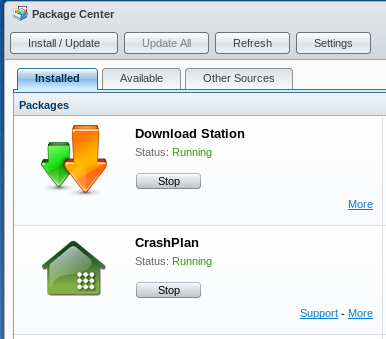

I realize that with the current chunking algorithm, sorting by newest first would likely cause problems for deduplication, resulting in an unnecessarily higher amount of data to upload and store (and pay for).īut that problem in turn wouldn’t exist - if the problems noted, acknowledged, and objectively measured in the discussion, “ Inefficient Storage after Moving or Renaming Files? #334”, were addressed - e.g. Which of course, makes the following problem worse. So as it is now, I have to resort to various tricks with mount -bind and filters, to approximate what I need. Most importantly: I can’t risk waiting 12-24 months for my newest stuff to get backed up to the cloud.My newer data is simply “more important” than older data, for various reasons, including taxes for example.Like slight variations used by many pros, I store my photos and videos in folders named YYYY/YYYYMMDD.).While I eventually want them all backed up to one place, there is increasily less urgency the older they get.The farther back in time you go in my dataset, mostly photos, the more ways the files are already backed up in increasingly multiple ways both locally and cloud.My backup set will take a year or two to complete to B2, assuming I can get it to go faster.Why? Consider my use-case and requirements for example, which aren’t unique: It would be fantastic, if the file backup priority was configurable! Even if with simple options, such as only one choice among: (Or by node ID? Or however the filesystem returns data according to whatever APIs you’re calling?) (Crashplan is supposedly a little more complex than that, also taking file size into account but obviously more weighted towards modified date.)ĭuplicacy seems to back up in alphabetical path order. It wasn’t configurable, but that’s what I wanted anyway. And before Crashplan, some other popular backup products which I don’t even remember.Ĭrashplan and the others backed up newest-files-first. 
I used Crashplan since they started, and recently switched to Duplicacy (after a months-long research and evaluation phase).
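
To make the request concrete, here is a minimal Python sketch of what a configurable backup priority could look like. It is an illustration only, not how Duplicacy (which is written in Go) works: the `FileEntry` type, the `order_for_backup` function, and the option names such as `newest-first` are all hypothetical, and the sample paths, sizes, and timestamps are made up.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FileEntry:
    path: str
    size: int     # bytes
    mtime: float  # modification time, seconds since the epoch


# Hypothetical option names; Duplicacy does not expose these.
# Each value is (sort key, reverse flag).
PRIORITIES = {
    "path": (lambda f: f.path, False),
    "newest-first": (lambda f: f.mtime, True),
    "oldest-first": (lambda f: f.mtime, False),
    "largest-first": (lambda f: f.size, True),
    "smallest-first": (lambda f: f.size, False),
}


def order_for_backup(files: List[FileEntry], priority: str = "path") -> List[FileEntry]:
    """Return the files in the order they would be handed to the uploader."""
    if priority not in PRIORITIES:
        raise ValueError(f"unknown priority: {priority!r}")
    key, reverse = PRIORITIES[priority]
    # Sort by path first so that ties are broken deterministically;
    # Python's sort is stable, also when reverse=True.
    by_path = sorted(files, key=lambda f: f.path)
    return sorted(by_path, key=key, reverse=reverse)


# Made-up sample data in a YYYY/YYYYMMDD layout.
files = [
    FileEntry("2015/20150704/a.jpg", 4_000_000, 1436000000.0),
    FileEntry("2023/20230101/b.mp4", 900_000_000, 1672531200.0),
    FileEntry("2019/20190615/c.jpg", 5_000_000, 1560556800.0),
]

for entry in order_for_backup(files, "newest-first"):
    print(entry.path)
```

With `newest-first`, the 2023 file is queued before the 2019 and 2015 ones, which is the behavior asked for here. Whether such an ordering hurts deduplication depends on how files are packed into chunks, as discussed above.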
